AI-Generated Résumés: What Employers Need to Know Now

If it feels like you suddenly have a lot more “qualified” applicants, you’re not imagining it.

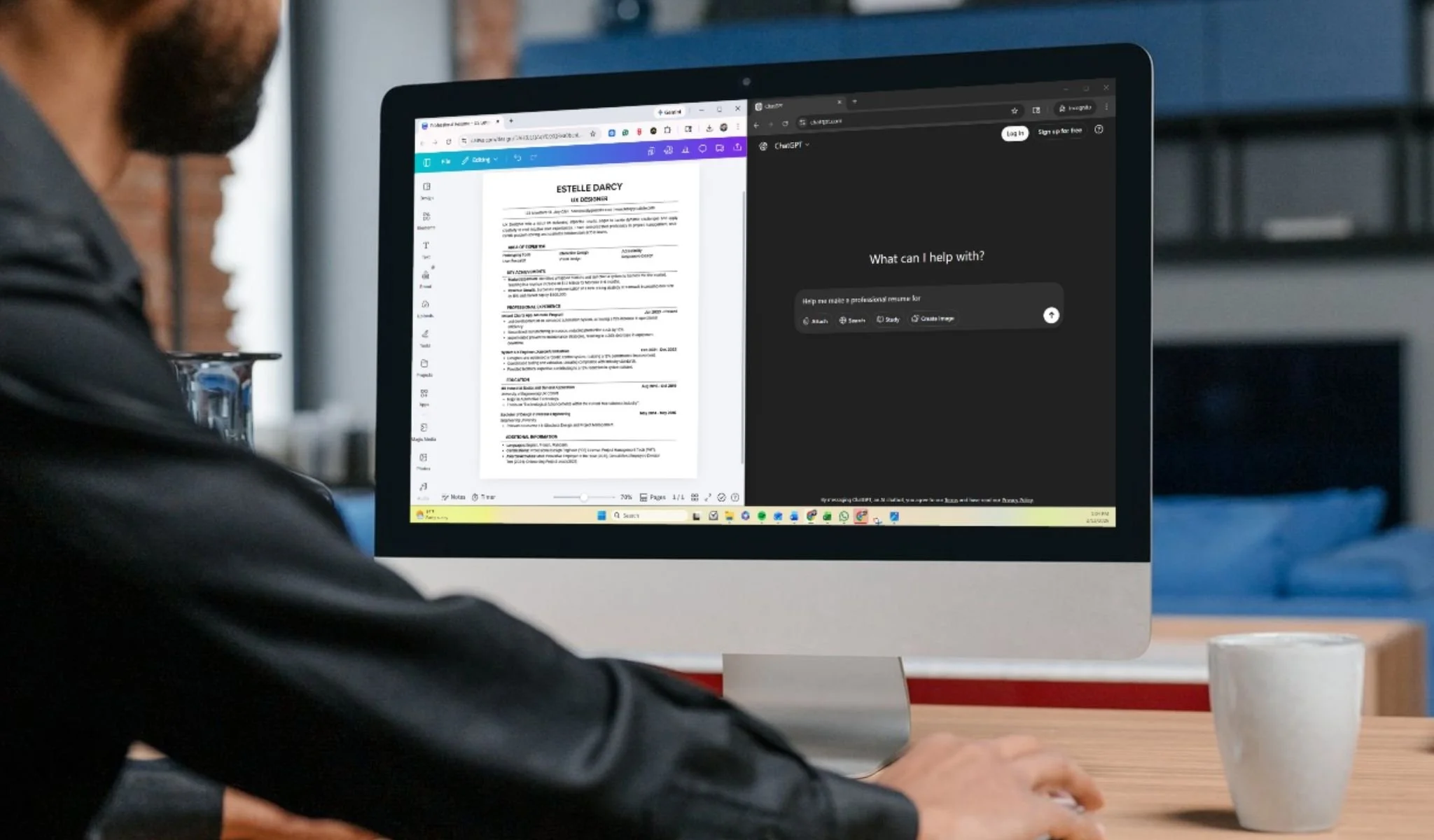

Generative AI has fundamentally changed how candidates apply for jobs. Résumés can now be produced, tailored, and submitted in minutes, often at scale. Employers, in turn, are leaning harder on automation to manage volume.

The result is a hiring process with more activity and less clarity.

This is not a moral debate about whether candidates should use AI. The underlying truth about capability hasn’t changed. What has changed is how easy it is to look impressive on paper.

When everyone can sound polished, polish stops being a reliable signal.

For CEOs, HR leaders, and hiring managers, the real question isn’t whether ChatGPT-generated résumés are “good” or “bad.” The real challenge is this: how do you make sound hiring decisions when anyone can generate a polished résumé that mirrors the job description? How do you distinguish candidates with real experience from those who are simply skilled at using AI?

You’re already hiring in an AI-driven market

AI has been part of hiring for years through applicant tracking systems and keyword screening. What’s changed is the sophistication and accessibility of generative tools. Candidates can now optimize language, structure, and alignment to job descriptions with little effort, while employers use increasingly advanced tools to scan, rank, and filter applications.

This has led to a kind of arms race. More people apply, so screening gets tougher. Candidates try even harder to stand out. Hiring teams feel overwhelmed, and it takes longer to fill jobs. This mirrors a broader concern many leaders are already navigating: technology is changing faster than people and processes can keep up, as we discussed in our FOBO article on upskilling and reskilling.

Recent commentary from Harvard Business Review describes this clearly: AI has made hiring noisier and more complex, even as it holds potential to improve outcomes when used intentionally.

Where AI-generated résumés can actually help employers

It is important to separate the frustration with volume from the legitimate benefits that AI can offer.

Clearer communication: Not all strong performers are strong writers. AI can help fix grammar, formatting, and clarity issues that do not reflect job skills. This can keep good candidates from being rejected for small mistakes.

Better translation of experience: Many candidates struggle to articulate their impact in the way employers expect. When used well, AI can help them describe their experience more clearly, making it easier for hiring teams to assess if they are a good fit.

Improved accessibility: For people who are not native English speakers, those with disabilities, or those returning to work, AI can help level the playing field by improving how they communicate, without changing their real qualifications.

When AI is used as a polishing and clarification tool, employers often benefit from more readable, more comparable résumés, though Inc. notes that the same tools are also driving application volume and résumé sameness at scale.

Where AI-generated résumés create real risk

Most employer concerns are not theoretical. They show up in day-to-day hiring challenges.

As more people apply, it becomes harder to spot the best candidates.

When it becomes easy to apply everywhere, pipelines fill with candidates who look plausible on paper but are not the right fit. Recruiters get overwhelmed, decisions take longer, and top candidates can get lost in the noise.

Candidates start to sound the same.

Generative AI favors safe, generic phrasing. Unique qualities and good judgment are harder to spot, so résumés become less useful as a differentiator.

Keyword optimization accelerates.

Candidates optimize for what they believe systems are filtering for. This can inflate perceived alignment and make candidates seem like a better fit than they really are.

Misrepresentation becomes easier.

There is a big difference between polishing language and inventing experience. AI lowers the barrier to crossing that line, intentionally or not.

Legal and compliance accountability remains with the employer.

Using AI in screening or selection does not remove responsibility. Employers remain accountable for bias, disparate impact, and fairness under existing employment laws, as outlined in current EEOC guidance on AI and employment decisions.

The wrong question: AI versus human

Let’s be clear: the résumé has always been a marketing document. AI didn’t change that. It simply made it easier to produce a convincing one.

The better question is:

Are we designing our hiring process to measure what matters, or are we being influenced by what looks good?

Once you answer that honestly, the conversation shifts from debating résumés to redesigning how you evaluate talent in an AI-shaped market.

A practical employer approach for hiring in an AI-polished world

Set expectations early

You do not need a long or detailed AI policy. What you need is clear guidance.

A practical standard many organizations are adopting is simple:

Candidates may use AI to improve clarity, grammar, and formatting.

Candidates should not overstate substance, accomplishments, or examples.

Candidates should be prepared to discuss and defend everything they submit.

This is not about strict enforcement. It is about setting clear expectations and protecting decision quality.

Build evidence into the process early

Anyone who has been in the hiring seat long enough will tell you: the résumé alone should never determine who gets hired. It is an introduction, not proof.

In an AI-polished market, this matters even more. If presentation quality is no longer a reliable differentiator, your process must surface evidence quickly.

Instead of treating the interview as the first real test, add a light but meaningful proof point early in the funnel:

A short, role-specific work sample tied to real business challenges

A brief written response to an actual scenario your team has faced

A structured screening conversation focused on decisions made, tradeoffs considered, and measurable outcomes

This does not need to be burdensome or complex. It simply shifts the emphasis from how well someone can describe work to how clearly they can think about it.

AI can help someone write about doing the work. It cannot reliably help them explain their work, defend their choices, or think in real time during follow-up.

Interview for ownership, not vocabulary

Well-polished résumés make it easier to be impressed. Strong interviews focus on substance.

Effective questions explore:

What the candidate chose not to do, and why

What broke or surprised them

How impact was measured

What they would change if given another chance

Specificity reveals capability. Generalities usually do not.

As noise increases, structure becomes a competitive advantage. Using consistent questions, defined scorecards, and real calibration conversations helps teams evaluate candidates more fairly and explain their decisions, especially when automation is involved in earlier screening stages.

Structure doesn’t slow hiring down. It protects decision quality.

Control the funnel

When application volume spikes, adding more filters is tempting. Sometimes the better move is narrowing who can apply in the first place:

Require a short, role-specific response

Limit one-click applications for critical roles

Rely more on targeted sourcing and referrals where quality matters more than speed

AI made applying easy. Your process should make proving fit harder.

What hiring leaders should take away

A ChatGPT-generated résumé is not automatically a red flag.

It is a reminder that looking polished is easy. Proving capability is not.

The organizations that will hire well in this environment treat résumés as introductions, not evidence. They design processes that surface real capability early, interview for judgment and ownership, and use AI thoughtfully without outsourcing accountability.

The goal is not to eliminate AI from hiring. It is to ensure technology sharpens decisions instead of clouding them.

You cannot slow the technology down. But you can raise the standard for how you evaluate talent.

That is the difference between reacting to change and leading through it.